RMSE in Regression: Real-World Applications

RMSE, or Root Mean Square Error, is a key metric for evaluating the accuracy of regression models. It measures how far predictions are from actual values, penalizing larger errors more heavily. This makes it especially useful in fields where big mistakes can have serious consequences, like finance, healthcare, and weather forecasting.
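For reference, the standard definition can be written as follows. The symbols are notation chosen here for illustration: $y_i$ is the actual value, $\hat{y}_i$ is the prediction, and $n$ is the number of observations.

$$
\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}
$$

Because each error is squared before averaging, an error twice as large contributes four times as much to the sum under the root.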

Here’s why RMSE stands out:

- Interpretable Units: Results are in the same units as the data (e.g., dollars, degrees).

- Focus on Large Errors: Squaring errors ensures bigger mistakes impact the score more.

- Widely Used Across Industries: From predicting electricity prices to improving healthcare outcomes, RMSE helps refine models.

For example:

- In March 2026, a Random Forest model predicting Texas electricity prices achieved an RMSE of $8.13 per megawatt-hour, aiding revenue planning.

- Retailers have cut inventory costs by 8% using RMSE-based demand forecasting.

- In weather forecasting, models with lower RMSE outperform competitors in predicting precipitation and temperature.

While RMSE is ideal for scenarios where large errors are costly, it’s often compared to other metrics like MAE (Mean Absolute Error) and R-squared. Each metric has its strengths, but RMSE’s balance of interpretability and error sensitivity makes it a popular choice.

Whether you’re fine-tuning financial models, optimizing retail pricing, or improving healthcare predictions, RMSE provides actionable insights for better decision-making.


RMSE Applications Across Different Industries

RMSE isn't just a theoretical concept - it's a practical tool that helps organizations evaluate and improve the accuracy of their predictions. By placing more weight on larger errors, RMSE allows decision-makers to identify where models excel and where they fall short, especially in scenarios where significant mistakes can have serious consequences. Its usefulness spans a variety of industries, each applying RMSE to solve unique challenges.

RMSE in Weather and Climate Forecasting

In weather prediction, RMSE is a key metric for identifying errors in forecasts related to temperature, precipitation, and extreme weather events. Its focus on larger errors makes it particularly effective in highlighting major forecasting failures, which can impact public safety and operational planning.

For instance, during the 2024–2025 winter season, Winter Science tested its Stratos AI model across 134 ski areas in the western United States, conducting 385,020 individual forecasts. Using URMA (Unrestricted Mesoscale Analysis) as the benchmark, the model achieved an average RMSE of 1.93 for 6-hour precipitation accumulation. This performance surpassed Google DeepMind's WeatherNext 2 (RMSE of 2.06) and the Global Forecast System (RMSE of 2.44).

As Winter Science highlighted, "By testing timing precision at 6‑hour resolution, the analysis evaluates not just whether models can predict the right amount of precipitation, but whether they can predict when it will fall."

In another example, researchers in January 2026 used a neural network calibration model to enhance ECMWF global forecasts in 10 cities across two European countries. Incorporating factors like the Air Quality Index (PM2.5) and MEGI, the hybrid model reduced the RMSE of 3-day temperature forecasts by 13% and 24-hour precipitation forecasts by 45% compared to raw ECMWF data.
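Percentage improvements like these are usually computed relative to the baseline model's RMSE. As a minimal sketch, the helper below (a hypothetical name, not from any of the cited studies) applies that arithmetic to the 6-hour precipitation figures quoted earlier in this section.

```python
def rmse_reduction_pct(baseline_rmse: float, model_rmse: float) -> float:
    """Percentage by which model_rmse improves on baseline_rmse."""
    if baseline_rmse <= 0:
        raise ValueError("baseline_rmse must be positive")
    return (baseline_rmse - model_rmse) / baseline_rmse * 100.0


# RMSE values quoted above for 6-hour precipitation accumulation.
stratos, weathernext, gfs = 1.93, 2.06, 2.44

print(f"vs WeatherNext 2: {rmse_reduction_pct(weathernext, stratos):.1f}%")
print(f"vs GFS: {rmse_reduction_pct(gfs, stratos):.1f}%")
```

On those figures, the reduction works out to roughly 6.3% against WeatherNext 2 and 20.9% against the Global Forecast System.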

Beyond weather, RMSE also plays a significant role in optimizing retail pricing strategies.

RMSE in Retail Price Prediction

In the retail sector, RMSE helps businesses fine-tune pricing strategies by improving the accuracy of product price predictions. This is especially critical in dynamic markets, where even a single pricing error can lead to significant financial losses. By reducing RMSE, retailers can better align prices with demand and store-specific factors.

For example, in October 2024, Michael Aselebe used a Random Forest model to predict the Maximum Retail Price (MRP) for products. After the model was tuned to minimize RMSE, its Mean Absolute Percentage Error (MAPE) dropped from 22.54% to 6.52%, which corresponds to accuracy rising from 77.46% to 93.48% (the accuracy figures are the complements of the MAPE figures, 100% minus MAPE).
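The accuracy and MAPE figures in this example sum to 100% in both cases, which suggests accuracy was reported as 100% minus MAPE. Under that assumption, a minimal sketch of the calculation looks like this. The prices are hypothetical and the function is illustrative, not the original author's code.

```python
def mape(actual, predicted):
    """Mean Absolute Percentage Error, in percent. Actual values must be non-zero."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    total = sum(abs((a - p) / a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)


# Hypothetical retail prices, each prediction off by 10% of the actual price.
actual_prices = [100.0, 200.0, 50.0]
predicted_prices = [90.0, 220.0, 55.0]

error_pct = mape(actual_prices, predicted_prices)
accuracy_pct = 100.0 - error_pct  # assumed definition of "accuracy"
print(f"MAPE={error_pct:.2f}% accuracy={accuracy_pct:.2f}%")
```

Note that MAPE and RMSE weight errors differently, so lowering one does not guarantee lowering the other by the same proportion.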

The financial impact of RMSE-optimized forecasting can be substantial. In June 2025, Nicolas Vandeput and the SupChains team demonstrated this through a proof of concept for an international grocery retailer with 150 stores and 500 products. Their optimized model reduced forecasting errors by 33% compared to existing software, potentially saving $172 million for a 10,000-store chain by cutting down on inventory and waste. On average, annual holding and waste costs for a retail store are estimated at 13% of inventory value.

RMSE's importance extends even further in healthcare, where the stakes are often life and death.

RMSE in Healthcare and Biomedical Analysis

In healthcare, RMSE is crucial for evaluating regression models that predict outcomes like recovery times, infection rates, and medical costs. Its ability to express errors in familiar units - such as days or dollars - makes it especially useful for clinicians. Additionally, its sensitivity to larger errors is vital in medical scenarios where significant mistakes, such as incorrect drug dosages or inaccurate recovery estimates, can pose serious risks.

As Coralogix explains, "A lower RMSE is indicative of a better fit for the data."

Sarah Lee from Number Analytics adds, "RMSE penalizes large deviations more than Mean Absolute Error (MAE), making it ideal when big misses are unacceptable."

How RMSE Improves Automated Casting Role Matching

In automated casting, platforms like CastmeNow use AI-powered regression models to assign fit scores (e.g., "80% Fit") that estimate how well an actor aligns with a specific role. These scores are based on factors like the actor's profile, preferences, and past successes. RMSE measures the gap between predicted fit scores and actual results, which are validated through outcomes such as audition requests and bookings. It is an example of RMSE at work outside its traditional domains.

Using RMSE to Improve Role Fit Predictions

RMSE's sensitivity to larger errors makes it especially helpful in ensuring high accuracy for casting predictions - particularly when a highly rated match turns out to be inaccurate. By squaring the errors, RMSE prioritizes avoiding significant mismatches rather than focusing solely on average error reduction. Platforms like CastmeNow refine their AI models using data from successful outcomes, such as bookings and auditions, to enhance future predictions. Monitoring RMSE after deployment allows the platform to identify and address performance drops caused by shifts in data patterns. A lower RMSE value signals a more accurate model, leading to better role matching.
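Post-deployment monitoring of this kind can be sketched as a rolling RMSE compared against a baseline. The class, the tolerance value, and the fit-score data below are all hypothetical and are not a description of how CastmeNow implements it.

```python
import math
from collections import deque


class RollingRMSE:
    """Tracks RMSE over the most recent `window` predictions."""

    def __init__(self, window: int):
        if window <= 0:
            raise ValueError("window must be positive")
        # deque with maxlen drops the oldest squared error automatically.
        self._sq_errors = deque(maxlen=window)

    def update(self, predicted: float, actual: float) -> float:
        """Record one prediction/outcome pair and return the current rolling RMSE."""
        self._sq_errors.append((predicted - actual) ** 2)
        return math.sqrt(sum(self._sq_errors) / len(self._sq_errors))


def drifted(current_rmse: float, baseline_rmse: float, tolerance: float = 0.25) -> bool:
    """True when the rolling RMSE exceeds the baseline by more than `tolerance` (a fraction)."""
    return current_rmse > baseline_rmse * (1.0 + tolerance)


# Hypothetical (predicted fit score, observed outcome score) pairs on a 0-100 scale.
monitor = RollingRMSE(window=3)
baseline = 5.0  # assumed RMSE measured at validation time
for predicted, actual in [(80, 78), (65, 70), (90, 60), (85, 50)]:
    current = monitor.update(predicted, actual)
    print(f"rolling RMSE={current:.2f} drifted={drifted(current, baseline)}")
```

A short window reacts quickly to shifts but is noisy, while a long window is steadier but slower to flag a drop in performance.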

This improved accuracy doesn't just enhance casting results - it also drastically simplifies the actors' experience.

How RMSE-Driven Models Save Actors Time

Reducing RMSE improves submission relevance by cutting down on "false positives" - roles that seem like a match but aren’t. This precision translates to significant time savings for actors. For example, in November 2025, actor Moses Jackson used CastmeNow to automate his role submissions. Over two weeks, the system submitted him for 484 roles, resulting in 19 auditions and 2 confirmed bookings. Jackson shared that automation allowed him to focus on being on set instead of manually searching for roles.

Another example comes from December 2025, when actor Ki'mari Lavender booked a role in New York City within 24 hours of joining the platform. The AI-driven system submitted her to hundreds of roles in under a month - a massive jump from her previous manual rate of 25–30 submissions per month. Many actors report cutting their daily submission time from 2–3 hours to just 10–30 minutes, all while securing 10× more auditions.

RMSE Compared to Other Performance Metrics

RMSE vs MAE vs MSE vs R-squared: Regression Metrics Comparison

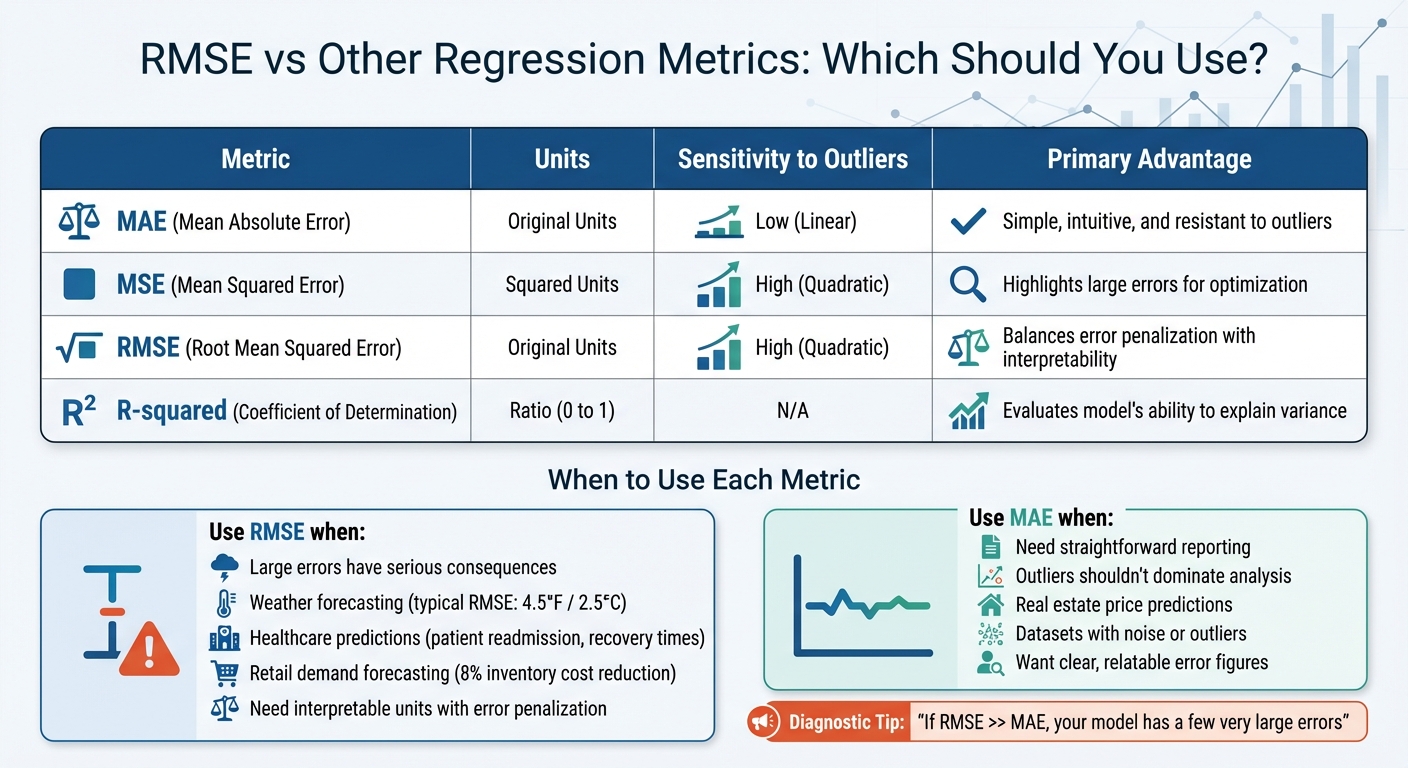

When working with regression models, it's essential to understand how RMSE compares with other performance metrics. While RMSE is a popular choice for assessing prediction accuracy, metrics like Mean Absolute Error (MAE), Mean Squared Error (MSE), and R-squared each offer unique insights. Knowing these differences can help you select the best metric for your specific use case.

Differences Between RMSE and Other Metrics

The key distinction among these metrics lies in how they handle errors. MAE calculates the average absolute difference between predicted and actual values, treating all errors equally. This makes it straightforward to interpret, as it's expressed in the same units as the data (e.g., dollars, degrees). It's also less influenced by outliers, which can be a significant advantage in noisy datasets.

MSE, on the other hand, squares the errors before averaging. This approach amplifies the impact of large errors, making it useful for scenarios where such errors need to be heavily penalized. However, its squared units (e.g., dollars²) can be harder to explain to non-technical stakeholders.

RMSE takes the square root of MSE, which converts the metric back into the original units. This makes it easier to interpret while still penalizing larger errors more than smaller ones. As Ayo Akinkugbe points out, "RMSE is one of the most popular regression metrics and is often the default choice in many applications." However, this sensitivity to large errors means that outliers affect RMSE far more than they affect MAE, so a handful of extreme points can dominate the score.

R-squared, meanwhile, measures an entirely different aspect of model performance. It reflects the proportion of variance in the data that the model explains, typically ranging from 0 to 1 (it can fall below 0 when a model fits worse than simply predicting the mean). For instance, an R² of 0.7 indicates the model accounts for 70% of the variability in the dataset. However, R² doesn't provide information about the magnitude of prediction errors, focusing instead on overall model fit.
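The four metrics described above can be computed side by side with nothing beyond the standard library. This is a minimal sketch on hypothetical data, and the function name is illustrative.

```python
import math


def regression_metrics(actual, predicted):
    """Return MAE, MSE, RMSE, and R-squared for paired actual/predicted values."""
    n = len(actual)
    if n == 0 or n != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")

    errors = [a - p for a, p in zip(actual, predicted)]
    mae = sum(abs(e) for e in errors) / n   # average absolute error
    mse = sum(e * e for e in errors) / n    # average squared error
    rmse = math.sqrt(mse)                   # back in the original units

    mean_actual = sum(actual) / n
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    if ss_tot == 0:
        raise ValueError("R-squared is undefined when actual values are constant")
    ss_res = sum(e * e for e in errors)
    r2 = 1.0 - ss_res / ss_tot              # share of variance explained

    return {"mae": mae, "mse": mse, "rmse": rmse, "r2": r2}


# Hypothetical data: three close predictions and one large miss.
actual = [3.0, 5.0, 7.0, 9.0]
predicted = [2.0, 5.0, 8.0, 13.0]
for name, value in regression_metrics(actual, predicted).items():
    print(f"{name}: {value:.3f}")
```

On this data, MAE is 1.5 while RMSE is about 2.12: the single miss of 4 units accounts for 16 of the 18 total squared error, which is the quadratic penalty in action.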

| Metric | Units | Sensitivity to Outliers | Primary Advantage |
|---|---|---|---|
| MAE | Original Units | Low (Linear) | Simple, intuitive, and resistant to outliers |
| MSE | Squared Units | High (Quadratic) | Highlights large errors for optimization |
| RMSE | Original Units | High (Quadratic) | Balances error penalization with interpretability |
| R-squared | Unitless ratio (typically 0 to 1) | N/A | Evaluates model's ability to explain variance |

Understanding these differences makes it easier to determine when RMSE - or another metric - is the right choice for your needs.

When to Use RMSE vs. Alternative Metrics

RMSE is ideal when large errors carry significant consequences. For example, in weather forecasting, where precision is critical, RMSE's sensitivity helps highlight major inaccuracies. With a typical temperature RMSE of around 4.5°F (approximately 2.5°C), most individual forecasts can be closer than that figure suggests, because a few large misses pull the metric up through its quadratic penalty. Similarly, in healthcare, RMSE is crucial for models predicting patient readmission risks or recovery times, as large errors can lead to resource mismanagement or compromised care. Retailers also benefit from RMSE in demand forecasting, with some achieving an 8% reduction in inventory costs through RMSE-optimized tuning.

If your focus is on straightforward reporting or when outliers shouldn't dominate the analysis, MAE is a better choice. For instance, real estate price predictions often rely on MAE because it provides a clear, relatable error figure in dollars. Additionally, MAE's resistance to occasional spikes in data makes it an excellent option for datasets with noise or outliers.

A helpful diagnostic is to compare RMSE and MAE values. If RMSE is significantly larger than MAE, it indicates that your model is producing a few very large errors. This insight can guide further refinement of your model or data preprocessing steps.
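One way to put this diagnostic into practice is to look at the ratio of RMSE to MAE. The ratio is always at least 1 and equals 1 only when every error has the same magnitude, so values well above 1 point to a few dominant errors. The sketch below uses hypothetical error lists.

```python
import math


def rmse_mae_ratio(errors):
    """Ratio of RMSE to MAE for a list of prediction errors (predicted minus actual)."""
    n = len(errors)
    if n == 0:
        raise ValueError("errors must be non-empty")
    mae = sum(abs(e) for e in errors) / n
    if mae == 0:
        raise ValueError("ratio is undefined when all errors are zero")
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    return rmse / mae


# Hypothetical residuals: evenly sized errors vs. one large miss.
even_errors = [2, -2, 2, -2]
spiky_errors = [1, -1, 1, -13]

print(f"even:  {rmse_mae_ratio(even_errors):.2f}")
print(f"spiky: {rmse_mae_ratio(spiky_errors):.2f}")
```

The evenly sized errors give a ratio of 1.00, while the list with one large miss gives about 1.64. What counts as "significantly larger" depends on the application, so treat any cutoff as a judgment call.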

Conclusion: The Role of RMSE in Regression and Beyond

RMSE stands out as a key metric for assessing regression models across various fields - be it weather forecasting, retail, healthcare, or automated casting platforms. Its ability to heavily penalize large errors makes it especially useful in scenarios where inaccuracies can lead to serious consequences.

As Or Jacobi, Senior Software Engineer at Coralogix, explains, "A lower RMSE is indicative of a better fit for the data."

One of RMSE's greatest strengths is its interpretability. Unlike MSE, RMSE presents results in the same units as the original data - whether that's dollars, degrees Fahrenheit, or even match scores. This makes it easier for both technical teams and business stakeholders to grasp the average size of prediction errors without needing additional explanation. This clarity is a big reason why RMSE is so widely used.

For platforms like CastmeNow, RMSE-driven models are essential for connecting actors with roles that truly fit their profiles. By reducing large prediction errors in role-matching algorithms, the platform helps actors focus on opportunities that align with their skills and experience. This not only saves time but also enhances career outcomes by minimizing mismatched applications. It's a clear example of how RMSE can have a direct, positive impact.

Across industries like weather forecasting, retail, and healthcare, RMSE continues to be a trusted tool for improving model performance. Many teams now rely on RMSE for detecting concept drift - when a model's accuracy declines due to changes in data patterns. It's also a go-to metric in automated machine learning platforms, helping with hyperparameter tuning and selecting the best models to ensure consistent, reliable predictions.

As Brandon Wohlwend, Mathematician and Software Engineer, aptly notes: "Evaluation metrics serve as our north star, guiding us towards effective and reliable models."

Whether you're predicting demand, estimating patient outcomes, or matching talent to roles, RMSE remains a dependable benchmark for making informed decisions.

FAQs

What’s a “good” RMSE for my regression model?

There is no universal threshold. A common rule of thumb treats an RMSE below 5% of the target variable's range as good, and below 10% of that range as acceptable in many cases. These cutoffs can differ significantly depending on the industry and the characteristics of the dataset being analyzed.
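To apply a range-based rule of thumb like this, divide RMSE by the range of the actual values. The sketch below uses hypothetical data and an illustrative function name. Range normalization is only one of several conventions, and others divide by the mean or the standard deviation instead.

```python
import math


def nrmse_by_range(actual, predicted):
    """RMSE divided by the range (max minus min) of the actual values."""
    n = len(actual)
    if n == 0 or n != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    value_range = max(actual) - min(actual)
    if value_range == 0:
        raise ValueError("range of actual values is zero")
    mse = sum((a - p) ** 2 for a, p in zip(actual, predicted)) / n
    return math.sqrt(mse) / value_range


# Hypothetical target values spanning a range of 40 units.
actual = [10.0, 20.0, 30.0, 40.0, 50.0]
predicted = [12.0, 18.0, 31.0, 39.0, 52.0]

print(f"RMSE as share of range: {nrmse_by_range(actual, predicted):.1%}")
```

Here the RMSE is about 1.67 units against a range of 40, or roughly 4.2% of the range. Because the range depends on the most extreme observations, a single unusual value can make this ratio look better than it should.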

When should I use RMSE instead of MAE or R-squared?

Choose RMSE when large errors matter more than small ones and you want the error expressed in the same units as your response variable. Prefer MAE when outliers shouldn't dominate the score, and use R-squared when the question is how much of the variance the model explains rather than how large the errors are.

How can I reduce RMSE without overfitting?

Reducing RMSE without overfitting means improving the model's fit while making sure it still generalizes to unseen data. These strategies help:

- Handle outliers and missing values: Clean your dataset by addressing outliers and filling in or removing missing data to reduce noise in the training process.

- Use regularization techniques: Methods like Lasso and Ridge regression help prevent overfitting by penalizing overly complex models.

- Simplify the model: Avoid unnecessarily complex algorithms or features that might lead to overfitting. A simpler model often generalizes better.

- Apply cross-validation with early stopping: Cross-validation ensures the model performs consistently across different data splits, while early stopping prevents overtraining on the dataset.

- Increase training data size: More data can help the model learn broader patterns, reducing the risk of overfitting while improving accuracy.

By combining these approaches, you can effectively lower RMSE while maintaining reliable predictive performance.
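Two of the strategies above, regularization and cross-validation, can be combined in a few lines. The sketch below is a simplified, standard-library-only illustration with one feature and hypothetical data: it fits a ridge regression (the intercept is left unpenalized) and picks the penalty with the lowest cross-validated RMSE. It does not cover early stopping, and in practice a library such as scikit-learn would normally be used instead.

```python
import math


def fit_ridge_1d(xs, ys, lam):
    """Fit y = slope * x + intercept with an L2 penalty `lam` on the slope only."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx + lam == 0:
        raise ValueError("cannot fit: x is constant and lam is zero")
    slope = sxy / (sxx + lam)  # larger lam shrinks the slope toward zero
    return slope, y_mean - slope * x_mean


def cv_rmse(xs, ys, lam, k=5):
    """RMSE over all held-out points from k interleaved folds."""
    n = len(xs)
    if n < k:
        raise ValueError("need at least k observations")
    sq_errors = []
    for fold in range(k):
        held_out = set(range(fold, n, k))  # every k-th point, offset by fold
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        slope, intercept = fit_ridge_1d(train_x, train_y, lam)
        sq_errors.extend(
            (ys[i] - (slope * xs[i] + intercept)) ** 2 for i in held_out
        )
    return math.sqrt(sum(sq_errors) / len(sq_errors))


# Hypothetical data: a roughly linear trend with hand-picked noise.
xs = [float(i) for i in range(10)]
noise = [0.5, -0.3, 0.8, -0.6, 0.1, -0.4, 0.7, -0.2, 0.3, -0.9]
ys = [2.0 * x + 1.0 + e for x, e in zip(xs, noise)]

candidates = [0.0, 0.1, 1.0, 10.0, 100.0]
best_lam = min(candidates, key=lambda lam: cv_rmse(xs, ys, lam))
print(f"best lam={best_lam}, CV RMSE={cv_rmse(xs, ys, best_lam):.3f}")
```

Selecting the penalty on held-out RMSE rather than training RMSE is what guards against overfitting here: training error alone would always favor the smallest penalty.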